As part of revamping its academic program review policy, the Council contracted with Gray Associates to develop a more data-informed, effective program review methodology that provides campuses with consistent, detailed information to help guide internal decisions about program needs and improvement. Below are the detailed components and timeline Gray Associates is using to create that methodology, and the expected outcome.

Components of the process

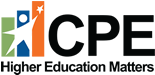

Demand analysis (program evaluation)

Student demand

Gray uses three sources to track the volume and growth of student interest by program:

- Inquiries: Gray has developed a database of over 70 million program inquiries by location, degree level, and modality (online or on-campus). This information is updated quarterly.

- Google Search Volume: Gray tracks approximately 20 keywords, by county, for each of the 200 largest programs, which together represent over 80% of all program completions. This data is updated monthly.

- IPEDS Completions: Gray includes completions by program as reported by all Title IV institutions to the Integrated Postsecondary Education Data System (IPEDS). This data is updated annually and currently reflects 2018 completions.

Employment

Gray uses several sources to understand employment opportunities for graduates and the degrees and skills that employers seek. Gray has enhanced the Bureau of Labor Statistics (BLS) total occupational employment information so that it more accurately represents the jobs graduates actually get. For example, Gray’s data highlights hundreds of occupations that Liberal Arts graduates enter but that are often not represented in BLS employment data. This alignment of programs and jobs is based on three sources of information:

- American Community Survey (ACS): Using data from two million Bachelor’s degree respondents, Gray provides employment information and career path mapping. This information allows universities to better understand employment potential and advanced degree attainment.

- Bureau of Labor Statistics (BLS): The BLS provides the total number of people employed in a field, historical employment trends, and a 10-year forecast. This data is updated annually and currently reflects the 2018 survey.

- Job Postings: Gray analyzes job postings and calculates the total number of job postings by occupation, program, location, and degree level requested. This data is updated quarterly.

Competition

Gray uses IPEDS to determine the number of competitors in a market. Gray also provides data on completions per capita (a measure of market saturation) and marketing costs, including Google cost-per-click and the average cost per inquiry. For every program, Gray also includes national distance education competition.
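As an illustration of the saturation measure mentioned above, completions per capita can be sketched as a simple ratio. This is a minimal sketch, not Gray's actual calculation: the function name, the per-100,000 scaling, and the sample figures are all assumptions made for the example.

```python
def completions_per_capita(completions: int, population: int, per: int = 100_000) -> float:
    """Return completions per `per` residents in a market.

    The per-100,000 scaling is an assumption for readability;
    the source does not state which scaling Gray uses.
    """
    if population <= 0:
        raise ValueError("population must be positive")
    return completions * per / population


# Hypothetical market: 150 completions among 600,000 residents.
rate = completions_per_capita(150, 600_000)
print(f"{rate:.1f} completions per 100,000 residents")
```

A higher value suggests a more saturated market for that program, all else being equal.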

Degree level

Gray provides data on completions and employment by degree award level.

Gray will work with each of the universities to ensure users are trained and understand the data and its limitations. Gray will also customize program scoring rubrics to align with institutional priorities. Once these rubrics are established, over 1,400 IPEDS programs (CIP codes) are scored and ranked. The system also generates a one-page scorecard for each program that includes over 50 variables, broken into the four categories listed above.
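The rubric-based scoring and ranking described above can be sketched as a weighted sum over the four categories. This is a hedged illustration only: the weights, the category scores, the program names, and the functions `score_program` and `rank_programs` are hypothetical and do not reflect Gray's actual rubric or software.

```python
# The four categories named in this section.
CATEGORIES = ("student_demand", "employment", "competition", "degree_level")


def score_program(category_scores: dict, weights: dict) -> float:
    """Weighted sum of category scores (an assumed scoring rule)."""
    return sum(weights[c] * category_scores[c] for c in CATEGORIES)


def rank_programs(programs: dict, weights: dict) -> list:
    """Return (program, score) pairs sorted from highest to lowest score."""
    scored = [(name, score_program(scores, weights)) for name, scores in programs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical institutional weights and category scores.
weights = {"student_demand": 4, "employment": 3, "competition": 2, "degree_level": 1}
programs = {
    "Program A": {"student_demand": 5, "employment": 3, "competition": 2, "degree_level": 4},
    "Program B": {"student_demand": 2, "employment": 4, "competition": 5, "degree_level": 3},
}

ranking = rank_programs(programs, weights)
for name, score in ranking:
    print(name, score)
```

Changing the weights is how an institution would express its own priorities in a sketch like this; the same category scores can produce a different ranking under different weights.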

To learn more about program evaluation, watch a video presentation.

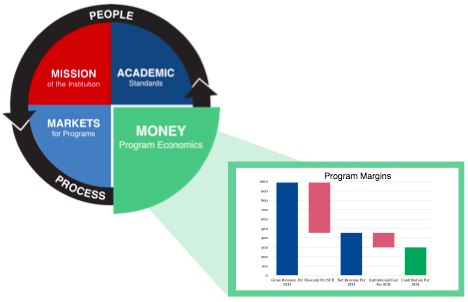

Contribution analysis (program economics)

In addition to data on program markets, universities need to understand program margins. Margin analysis enables universities to use high-margin programs to subsidize lower-margin, mission-critical programs and other activities.

For this purpose, a program is the sum of all the courses, inside and outside the department, taken by students in a major. Gray calculates variable margin (revenue minus direct instructional cost) and does not include overhead, because overheads are not usually affected by program decisions.
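The definition above can be illustrated with a short sketch: variable margin is summed over every course taken by students in a major, whether or not the course is taught in the major's own department, and overhead is left out. The record layout, course labels, and dollar figures below are hypothetical and are not drawn from Gray's platform.

```python
from collections import defaultdict


def variable_margin_by_program(records):
    """Sum revenue minus direct instructional cost per major.

    `records` is an iterable of (major, course, revenue, direct_cost)
    tuples, one per student-course enrollment (an assumed layout).
    Overhead is deliberately excluded.
    """
    margins = defaultdict(int)
    for major, _course, revenue, direct_cost in records:
        margins[major] += revenue - direct_cost
    return dict(margins)


# Hypothetical enrollments: a History major takes one course inside
# the department and one outside it; both count toward History.
records = [
    ("History", "HIST 101", 1200, 400),
    ("History", "MATH 110", 1000, 600),
    ("Nursing", "NURS 201", 1500, 1100),
]

margins = variable_margin_by_program(records)
print(margins)
```

Attributing courses to the student's major, rather than to the teaching department, is what lets the margin reflect the whole program as defined here.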

Gray’s Program Economics platform tracks gross revenue, institutional scholarships and grants, instructional cost, and margins. Gray assembles this data by student and course, which allows universities to see financial results by program, course, location, and student segment. To protect privacy, all student and faculty identities are encrypted before they are given to Gray.

To learn more about Program Economics, watch a video presentation.

Workshops

Gray will facilitate campus workshops to share the cost and demand analyses with faculty and administrators.

- Gray has a recommended list of attendees for the workshop. However, each campus should determine the appropriate composition for its workshop.

- In combination with other data and internal reviews, the Gray analyses can help campus leaders determine whether new programs should be offered, which programs should be modified, which programs should be sustained as is, and whether some programs should be closed or suspended.

Institutions should take the results of the workshop and Gray Associates’ analyses and use them in conjunction with any other data and internal program review analyses that the campus currently uses.

Final report

Institutions will submit a final report to CPE by June 30, 2020, that includes:

- A summary of the institution’s program review efforts;

- A summary of the analyses and discussions from the campus workshop; and

- A list of programs to be included in the Start, Sustain, Fix/Grow, and Stop categories and a rationale for each decision.

While the Gray Associates analysis and other campus data sources may lead decision-makers to recommend that some programs be closed, the decision to do so will originate with the institution, in keeping with the expectations of institutional governance and SACSCOC guidelines.

CPE staff review

CPE is expected to aggregate and review higher education efforts statewide for context and trends that extend beyond each institution’s sphere of influence. CPE will not compare individual programs but will look for patterns in the data, for instance to identify opportunities for collaboration. CPE will aggregate data from the institutional reviews to gain insight regarding state-level direction and strategic planning. Discussions will occur with each institution regarding its role in those efforts.

Presentation to the Council

An overarching summary of the findings will be presented to the Council. If requested by CPE members, institutional leaders may be asked to make presentations. This report and any requested presentations will become part of the public record.

Timeline

All elements of the process should be complete and a final report submitted by June 30, 2020.

Expected outcome

During this academic year, CPE staff will work with campus leaders, with input from Gray Associates, to determine a revised program review policy that is informed by this year’s work and will best reflect the criteria that are statutorily outlined in KRS 164.020 (16).